The Quality Response Gap in Manufacturing

In discrete and process manufacturing, quality events are inevitable. Raw material variability, equipment drift, environmental changes, and human factors all introduce deviation. The issue isn’t that anomalies occur; it’s how long it takes to detect them, trace their impact, and initiate corrective action.

In most environments today, this cycle is measured in days, not minutes. A quality event is detected downstream, often after product has moved through multiple stages. An engineer investigates manually, cross-referencing batch records, production logs, and equipment data. Impact assessment requires tracing lot genealogy across lines and shifts. Corrective and preventive actions (CAPAs) are documented manually, and compliance reports are assembled by hand.

The Cost of Delayed Response

Every hour in that cycle represents potential scrap, rework, customer impact, and compliance risk. In high-precision industries like semiconductor and aerospace manufacturing, unplanned downtime can cost upward of $1 million per hour. Even in less capital-intensive environments, the compounding cost (scrap, rework, customer claims, regulatory findings) adds up fast.

The AI in manufacturing market is projected to reach $155 billion by 2030, growing at over 35% annually. Quality management and predictive maintenance are the two leading adoption areas. The reason is straightforward: these are the use cases where ROI is most immediate and measurable.

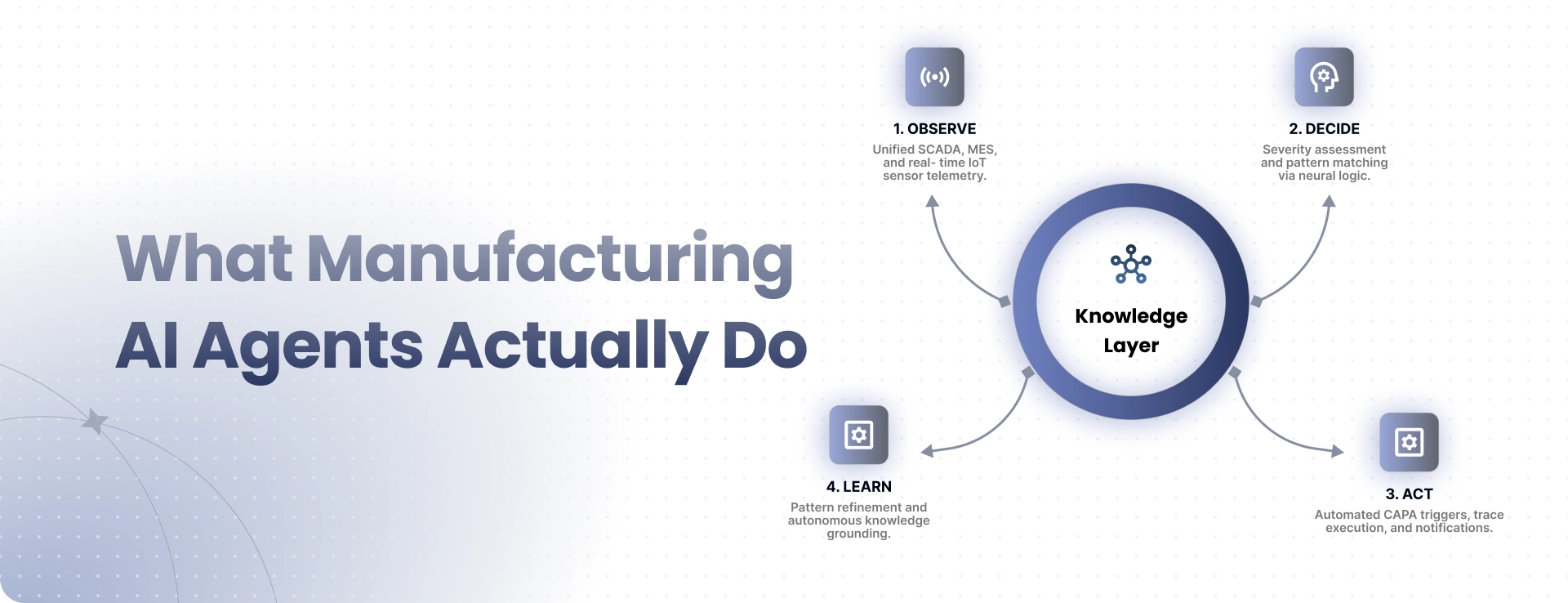

What Manufacturing AI Agents Actually Do

Agentic AI for manufacturing operates on the same core loop that drives all Tachyon Aura agents (Observe, Decide, Act, Learn), applied here to production-specific data streams and workflows.

Observe and Decide

Agents continuously ingest signals from MES, SCADA, IoT sensors, ERP quality modules, and historian databases. They monitor production parameters in real time: temperatures, pressures, cycle times, dimensional measurements, yield rates. They detect deviations from expected patterns before they become visible in traditional SPC charts. Unlike threshold-based alerting, agents build dynamic baselines per asset, per product, per production run, so they understand what “normal” looks like for your specific environment.
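To make the contrast with fixed thresholds concrete, here is a minimal sketch of a per-stream baseline. It is an illustration under stated assumptions, not Tachyon Aura's actual detection logic: the class names `Baseline` and `BaselineMonitor`, the z-score limit of 3, and the five-reading warm-up are all hypothetical choices. Each (asset, product) pair keeps its own running mean and variance, so the same reading can be normal on one chamber and anomalous on another.

```python
from collections import defaultdict
from dataclasses import dataclass
import math


@dataclass
class Baseline:
    """Running mean and variance for one stream (Welford's online algorithm)."""
    n: int = 0
    mean: float = 0.0
    m2: float = 0.0  # sum of squared deviations from the running mean

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def zscore(self, x: float) -> float:
        if self.n < 2:
            return 0.0
        std = math.sqrt(self.m2 / (self.n - 1))
        return 0.0 if std == 0 else (x - self.mean) / std


class BaselineMonitor:
    """Hypothetical monitor: one baseline per (asset, product) pair."""

    def __init__(self, z_limit: float = 3.0, warmup: int = 5):
        self.z_limit = z_limit  # assumed limit, not a recommended setting
        self.warmup = warmup    # readings to collect before flagging anything
        self.baselines = defaultdict(Baseline)

    def observe(self, asset: str, product: str, value: float) -> bool:
        """Return True if the value deviates from this stream's own baseline."""
        b = self.baselines[(asset, product)]
        is_anomaly = b.n >= self.warmup and abs(b.zscore(value)) > self.z_limit
        if not is_anomaly:
            b.update(value)  # learn only from readings considered normal
        return is_anomaly
```

A production system would also need to handle seasonality, run-to-run resets, and slow drift that a running mean would absorb; this sketch only shows why "normal" has to be defined per stream.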

When an anomaly is detected, agents assess severity using historical patterns and production context. They determine whether the deviation is within acceptable tolerance, trending toward out-of-spec, or already a quality event requiring immediate action. This isn’t simple pattern matching; it’s contextual reasoning that accounts for product type, production stage, equipment maintenance history, and downstream impact potential. The agent can distinguish between a sensor drift that needs recalibration and a process shift that’s producing defective product.
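The three-way outcome described above (within tolerance, trending toward out-of-spec, already a quality event) can be sketched as a simple classifier. This is a deliberately reduced stand-in: it uses only spec limits and a linear trend projection, and it ignores the product, stage, and maintenance context that the agent is described as weighing. The names `Severity` and `classify` and the ten-sample `horizon` are assumptions made for illustration.

```python
from enum import Enum
from statistics import mean


class Severity(Enum):
    WITHIN_TOLERANCE = "within_tolerance"
    TRENDING_OUT = "trending_toward_out_of_spec"
    QUALITY_EVENT = "quality_event"


def slope(values: list[float]) -> float:
    """Least-squares slope of the values against their sample index."""
    n = len(values)
    if n < 2:
        return 0.0
    x_bar = (n - 1) / 2
    y_bar = mean(values)
    num = sum((i - x_bar) * (y - y_bar) for i, y in enumerate(values))
    den = sum((i - x_bar) ** 2 for i in range(n))
    return num / den


def classify(values: list[float], lower: float, upper: float,
             horizon: int = 10) -> Severity:
    """Classify a non-empty window of recent readings against spec limits.

    horizon is the (assumed) number of future samples to project the trend over.
    """
    latest = values[-1]
    if latest < lower or latest > upper:
        return Severity.QUALITY_EVENT
    projected = latest + slope(values) * horizon
    if projected < lower or projected > upper:
        return Severity.TRENDING_OUT
    return Severity.WITHIN_TOLERANCE
```

For example, with limits of 398 and 402, the window `[400.0, 400.3, 400.6, 400.9]` is still in spec but classifies as `TRENDING_OUT`, because the fitted slope of 0.3 per sample projects past the upper limit within ten samples.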

Act and Learn

Based on the assessment, agents can automatically trace batch/lot impact through the genealogy tree, identifying every affected unit across production stages. They open a CAPA with pre-populated root cause analysis, notify relevant quality engineers with full diagnostic context, and begin generating compliance documentation. For approved actions, agents can adjust production parameters, quarantine affected lots, or halt a production run without waiting for human intervention.
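Tracing impact through the genealogy tree is, at its core, a graph traversal. The sketch below assumes the genealogy has already been exported from the MES as a mapping from each lot to the lots that consumed it; the function name `trace_affected_lots` and the lot IDs are hypothetical, and real MES genealogy APIs will look different.

```python
from collections import deque


def trace_affected_lots(genealogy: dict[str, list[str]], source_lot: str) -> list[str]:
    """Breadth-first walk from a suspect lot to every downstream lot that consumed it."""
    affected = []
    seen = {source_lot}
    queue = deque([source_lot])
    while queue:
        lot = queue.popleft()
        for child in genealogy.get(lot, []):
            if child not in seen:  # a lot can be reached through several parents
                seen.add(child)
                affected.append(child)
                queue.append(child)
    return affected


# Illustrative data: parent lot -> lots produced from it.
genealogy = {
    "RAW-001": ["WIP-010", "WIP-011"],
    "WIP-010": ["FG-100"],
    "WIP-011": ["FG-100", "FG-101"],
    "RAW-002": ["WIP-012"],
}
print(trace_affected_lots(genealogy, "RAW-001"))
# ['WIP-010', 'WIP-011', 'FG-100', 'FG-101']
```

The `seen` set matters because lots are routinely blended or merged: `FG-100` is reachable from both intermediate lots here but should be quarantined only once.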

Every quality event, whether agent-resolved or human-escalated, feeds back into the knowledge layer. Agents refine their detection models, improve their root cause correlation accuracy, and build an increasingly precise understanding of your specific production environment. The system gets measurably smarter with every event it handles.

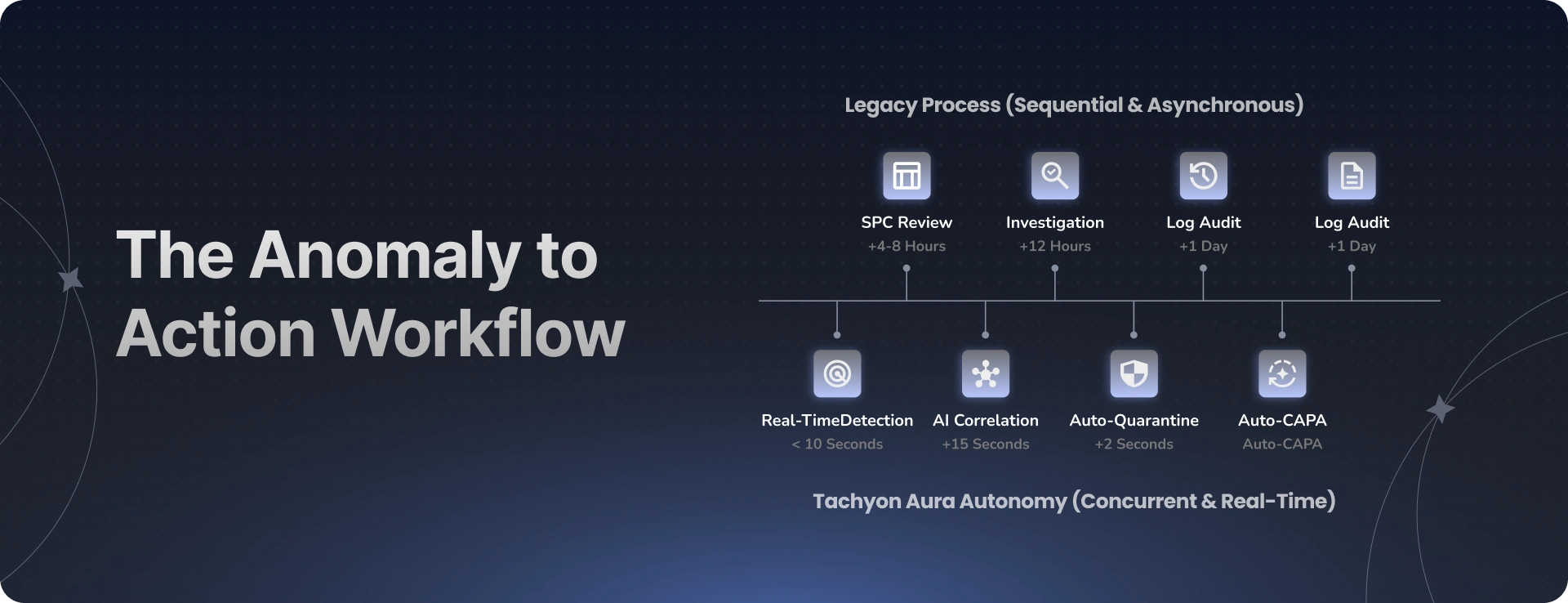

The Anomaly-to-Action Workflow

Consider a scenario in semiconductor manufacturing: a chamber temperature in a deposition process begins trending 0.3% above the control limit during a night shift.

In a traditional setup, the trend might not be flagged until the next shift’s SPC review, 8 to 12 hours later. By then, several wafer lots have been processed under suboptimal conditions. An engineer investigates: pulling chamber logs, reviewing maintenance history, and cross-referencing recent process changes. Impact assessment takes hours as they trace which lots were in the chamber during the deviation window. The CAPA takes days to document. Total elapsed time from onset to containment: potentially 24 to 48 hours.

The Same Scenario Through Tachyon Aura

The agent detects the trend within minutes of deviation onset. It correlates the temperature shift with recent maintenance records and historical chamber performance data. It identifies that the deviation pattern matches a known precursor to a specific failure mode documented three months prior.

It quarantines the current lot, alerts the on-shift engineer with full diagnostic context (including the historical match and recommended action), opens a CAPA with pre-populated root cause analysis, and generates initial compliance documentation, all before the next lot enters the chamber. Elapsed time from detection to containment: minutes, not shifts.

Where the Data Comes From

Manufacturing AI agents are only as effective as the data they can access. Tachyon Aura integrates with the systems that are already generating data on your production floor, rather than with theoretical data sources:

MES platforms: SAP ME/MII, Dassault Apriso, Siemens Camstar, Critical Manufacturing. These provide production orders, routing information, in-process quality data, and the genealogy/traceability records that agents need to trace batch/lot impact. The MES is typically the richest source of production context.

SCADA and IIoT: Real-time sensor data from production equipment, including temperatures, pressures, vibration, flow rates, and dimensional measurements. These are the signals that enable real-time anomaly detection. The frequency and granularity of sensor data directly affect how early an agent can catch a deviation.

ERP quality modules: SAP QM, custom quality databases. These contain specifications, inspection plans, nonconformance records, and historical CAPA data. Agents use this context to assess severity and determine appropriate response actions.

Historian databases: OSIsoft PI, Wonderware. Time-series process data for trend analysis and historical pattern matching. When an agent detects a current anomaly, it queries the historian to find similar patterns and their outcomes, turning historical data into predictive intelligence.
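The historian lookup described above amounts to a similarity search over time-series windows. The following is a minimal sketch that assumes the historical trace has already been pulled into memory as a list of floats; it does not call any real historian API, and `znorm` and `best_match` are hypothetical helper names. Each window is z-normalized so that matching is on the shape of the deviation rather than its absolute level.

```python
import math


def znorm(window: list[float]) -> list[float]:
    """Scale a window to zero mean and unit variance so only its shape remains."""
    mu = sum(window) / len(window)
    sd = math.sqrt(sum((v - mu) ** 2 for v in window) / len(window))
    if sd == 0:
        return [0.0] * len(window)  # flat window: no shape to compare
    return [(v - mu) / sd for v in window]


def best_match(history: list[float], current: list[float]) -> tuple[int, float]:
    """Return (start index, distance) of the historical window closest in shape."""
    m = len(current)
    if m == 0 or len(history) < m:
        raise ValueError("history must be at least as long as the current window")
    target = znorm(current)
    best_idx, best_dist = -1, math.inf
    for start in range(len(history) - m + 1):
        candidate = znorm(history[start:start + m])
        dist = math.dist(target, candidate)  # Euclidean distance
        if dist < best_dist:
            best_idx, best_dist = start, dist
    return best_idx, best_dist
```

This brute-force scan is fine for short traces; on multi-year historian data a real deployment would more likely rely on indexing or a dedicated time-series similarity method. The returned index is what lets the agent look up what happened after the earlier occurrence.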

This integration-first approach means deployment doesn’t require a data architecture overhaul. You don’t need a data lake project before you can deploy quality agents; you need accessible, reasonably clean data from your existing production systems.

Measurable Impact

Organizations deploying Aura’s manufacturing agents target impact across the KPIs that matter most to production leadership: downtime reduction, scrap reduction, yield improvement, and compliance documentation speed.

Industry benchmarks provide context for what’s achievable. AI-driven predictive maintenance has been shown to reduce unplanned downtime by 30 to 50% and lower maintenance costs by 25 to 40%. AI-powered quality inspection systems are delivering up to 40% less waste and 5 to 15% yield improvements through early defect detection and process correction. Manufacturers implementing these systems report 10 to 20% reductions in rework and scrap.

The Compounding Advantage

The value goes beyond immediate KPI improvement. As agents learn your production patterns, they catch deviations earlier, reduce the frequency of quality events over time, and build an increasingly accurate predictive model for your specific environment. The system doesn’t just react better; it prevents more.

For manufacturers in aerospace and defense, semiconductor, pharmaceutical, automotive, CPG, and chemicals (industries where Tachyon has deep domain experience), the combination of quality agents and production expertise creates a measurable competitive advantage.

Starting the Right Way

We structure manufacturing AI engagements as 4-week pilots. Week one captures your current baselines and identifies the highest-impact quality workflow. Week two stands up the agent with integration to your MES, SCADA, and quality systems. Week three adds runbook execution, enhanced detection rules, and reporting. Week four reviews real KPI movement against baselines and builds the scale plan.

The key to a successful manufacturing AI pilot is selecting the right starting point. We typically recommend beginning with a quality workflow that has three characteristics: high variability (meaning deviations occur frequently enough to train the agent quickly), documented impact costs (so ROI is measurable), and accessible data (MES and sensor data are flowing and reasonably clean). Don’t start with the most complex process in the plant; start with the one where agent value can be demonstrated most clearly within four weeks.

Conclusion

Manufacturing quality has always been about speed of response. The faster you detect a deviation, trace its impact, and initiate correction, the less it costs. Agentic AI compresses that cycle from days to minutes, not by replacing quality engineers, but by giving them an autonomous co-pilot that handles the detection, correlation, and documentation work at machine speed.

The manufacturers who deploy quality agents now won’t just reduce scrap and downtime in the short term. They’ll build a compounding data advantage that makes their operations measurably smarter with every production cycle. In a market where 76% of manufacturers are planning technology adoption and the AI manufacturing market is growing at over 35% annually, early movers aren’t just gaining efficiency; they’re building competitive moats.

Ready to pilot AI agents on your production floor?

See how AI agents can detect quality anomalies early, trigger faster corrective action, and help manufacturers reduce waste, downtime, and costly defects before they escalate.